AI systems that analyze surveillance and communications data work by processing massive volumes of video feeds, audio streams, text messages, and network traffic through machine learning algorithms that detect patterns, identify anomalies, and extract actionable intelligence in real time. These systems combine computer vision for video analysis, natural language processing for communications monitoring, and predictive analytics to transform raw data into structured insights that would take human analysts weeks or months to produce manually. The global market for AI in video surveillance alone reached $6.51 billion in 2024 and is projected to hit $28.76 billion by 2030, reflecting the rapid adoption across government, enterprise, and public safety sectors.

A practical example of these capabilities appears in the NYPD’s Domain Awareness System, which integrates license plate reader data, facial recognition technology, gunshot detection sensors, and video feeds into a unified database that enables real-time monitoring across the entire city. This type of data fusion allows investigators to correlate events across multiple data sources instantly, rather than manually reviewing footage and records.

This article examines how these systems function technically, their applications in law enforcement and enterprise security, the privacy and civil liberties concerns they raise, implementation considerations for organizations, and the regulatory frameworks emerging to govern their use.

Table of Contents

- How Do AI Surveillance Systems Process and Analyze Data?

- Communications Intelligence and Natural Language Processing Applications

- Government and Intelligence Applications of AI Surveillance

- Privacy Concerns and the Civil Liberties Tradeoffs

- Implementation Challenges and Common Deployment Pitfalls

- Regulatory Frameworks Shaping AI Surveillance Deployment

- How to Prepare

- How to Apply This

- Expert Tips

- Conclusion


How Do AI Surveillance Systems Process and Analyze Data?

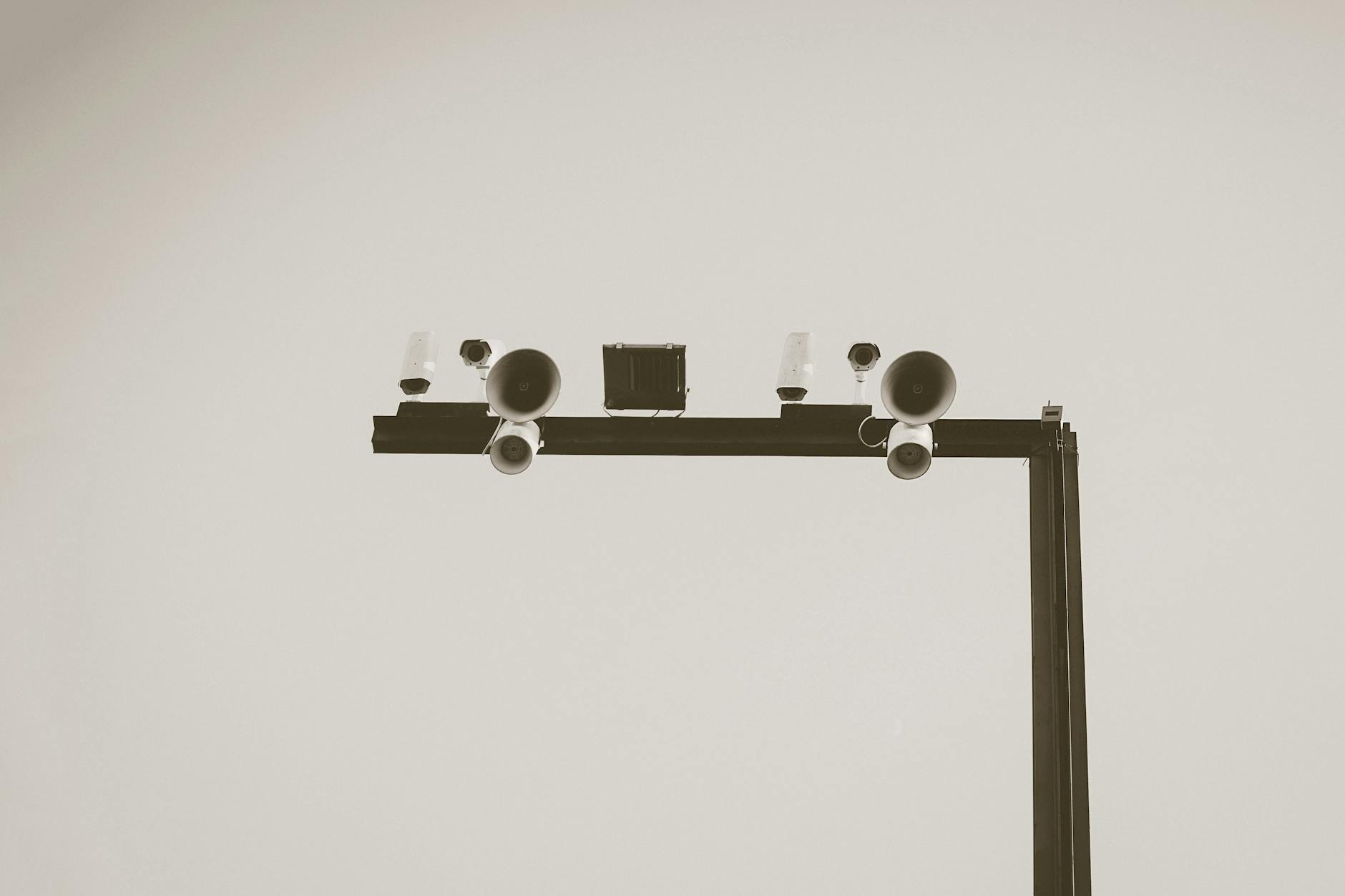

Modern AI surveillance platforms operate through a layered architecture that begins with data ingestion from multiple sources (security cameras, communication intercepts, social media feeds, and sensor networks) before applying specialized algorithms for different data types. Video analytics use convolutional neural networks trained to detect objects, recognize faces, track movement patterns, and identify specific behaviors like loitering or unauthorized access. Communications analysis relies on natural language processing to interpret text, transcribe audio, classify content, and flag potential threats within massive volumes of unstructured data.

The processing happens either at the edge, directly on camera hardware, or in centralized cloud infrastructure. Edge-based systems reduce bandwidth demands and latency by performing initial analysis locally, sending only relevant events to central servers. According to industry analysis, modern edge-based AI cameras can run multiple analytics simultaneously (object detection, license plate recognition, and safety compliance monitoring) on the same device. However, edge processing limits the sophistication of analysis possible compared to cloud systems with greater computational resources.

A key distinction exists between real-time alerting systems and forensic analysis tools. Real-time systems must process data fast enough to enable immediate response, prioritizing speed over depth. Forensic tools analyze historical data to reconstruct events, identify connections between individuals, and build comprehensive intelligence pictures. Many enterprise deployments use both approaches, with real-time alerts triggering deeper forensic analysis when warranted.
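The division of labor described above, with local filtering at the edge and escalation to deeper review, can be sketched roughly as follows. This is a minimal illustration, not any vendor's implementation: the `Detection` type, the label set, the 0.8 confidence threshold, and the "three events in 60 seconds" escalation rule are all hypothetical choices made for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Detection:
    camera_id: str
    label: str         # class predicted by the on-camera model, e.g. "person"
    confidence: float  # model score in [0, 1]
    timestamp: float   # seconds since some reference time


# Hypothetical policy values; a real deployment would tune these per site.
RELEVANT_LABELS = {"person", "vehicle"}
MIN_CONFIDENCE = 0.8


def filter_at_edge(detections):
    """Keep only detections worth sending upstream; everything else stays on the device."""
    return [
        d for d in detections
        if d.label in RELEVANT_LABELS and d.confidence >= MIN_CONFIDENCE
    ]


def needs_forensic_review(events, window_s=60.0, min_events=3):
    """Return cameras that forwarded at least min_events events within window_s seconds."""
    by_camera = {}
    for e in sorted(events, key=lambda e: e.timestamp):
        by_camera.setdefault(e.camera_id, []).append(e.timestamp)

    flagged = set()
    for camera_id, times in by_camera.items():
        # Slide over consecutive groups of min_events timestamps.
        for i in range(len(times) - min_events + 1):
            if times[i + min_events - 1] - times[i] <= window_s:
                flagged.add(camera_id)
                break
    return flagged


raw = [
    Detection("cam-1", "person", 0.95, 0.0),
    Detection("cam-1", "tree", 0.99, 5.0),     # irrelevant label, dropped at the edge
    Detection("cam-1", "person", 0.40, 10.0),  # low confidence, dropped at the edge
    Detection("cam-1", "vehicle", 0.85, 20.0),
    Detection("cam-1", "person", 0.90, 45.0),
    Detection("cam-2", "person", 0.91, 30.0),
]
forwarded = filter_at_edge(raw)
print(len(raw), "->", len(forwarded))    # 6 -> 4
print(needs_forensic_review(forwarded))  # {'cam-1'}
```

The point of the sketch is the shape of the trade-off: the edge step is cheap and discards most data, while the escalation step runs centrally on the much smaller stream of forwarded events.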

Communications Intelligence and Natural Language Processing Applications

Communications surveillance powered by AI extends far beyond simple keyword matching. Modern systems employ sophisticated NLP to understand context, sentiment, intent, and relationships between communicating parties. Law enforcement agencies increasingly rely on these tools to rapidly interpret massive volumes of police reports, body-worn camera audio, social media content, and intercepted communications. The technology automates tasks like report drafting, transcription, evidence classification, and threat detection, significantly reducing the manual workload for analysts.

Enterprise compliance represents another major application area. Financial services firms use AI-enabled surveillance to monitor employee communications for regulatory violations, insider trading indicators, and misconduct. Smarsh’s Noise Reduction Agent, for instance, applies AI during message ingestion to automatically identify and suppress low-risk content like spam and newsletters while preserving complete records for audit purposes. This approach addresses a fundamental challenge: organizations generate too much communications data for human review, but must maintain comprehensive archives for regulatory compliance.

These tools have clear limits, however. A system trained primarily on formal written communications may perform poorly when analyzing informal text messages, slang, code words, or conversations in multiple languages. Organizations deploying these systems must validate their effectiveness against the actual communication patterns present in their environment. The technology also struggles with highly contextual content where meaning depends on information the system cannot access, such as prior in-person conversations or shared knowledge between parties.
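One way to act on the validation advice above is to score the system separately for each communication channel on a hand-labeled sample, so that weak performance on informal channels is not hidden by a strong overall average. The sketch below assumes a hypothetical sample format of `(channel, model_flagged, truly_risky)` tuples; the data is synthetic and chosen only to show the pattern.

```python
from collections import defaultdict


def per_channel_metrics(samples):
    """Compute precision and recall per channel.

    samples: iterable of (channel, model_flagged, truly_risky) tuples, where the
    last field comes from human review of a labeled validation sample.
    """
    counts = defaultdict(lambda: {"tp": 0, "fp": 0, "fn": 0})
    for channel, flagged, risky in samples:
        c = counts[channel]
        if flagged and risky:
            c["tp"] += 1
        elif flagged:
            c["fp"] += 1
        elif risky:
            c["fn"] += 1

    report = {}
    for channel, c in counts.items():
        flagged_total = c["tp"] + c["fp"]
        risky_total = c["tp"] + c["fn"]
        report[channel] = {
            # None means the metric is undefined for this sample.
            "precision": c["tp"] / flagged_total if flagged_total else None,
            "recall": c["tp"] / risky_total if risky_total else None,
        }
    return report


# Synthetic labels for illustration only.
labeled = [
    ("email", True, True), ("email", True, True),
    ("email", False, False), ("email", True, False),
    ("chat", False, True), ("chat", False, True),
    ("chat", True, True), ("chat", False, False),
]
report = per_channel_metrics(labeled)
for channel, metrics in report.items():
    print(channel, metrics)
```

In this synthetic sample the model catches every risky email but misses two of the three risky chat messages, which is the kind of gap that only a per-channel breakdown makes visible.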

Government and Intelligence Applications of AI Surveillance

Government intelligence agencies represent the most sophisticated users of AI-powered surveillance and communications analysis. The NSA leads U.S. signals intelligence operations, applying machine learning to identify patterns in massive amounts of intercepted signals, recognize encrypted message structures, and detect anomalies in communication behavior. AI systems can now automatically classify, decode, and analyze data in real time, significantly reducing the time needed for human analysis, a capability that has transformed intelligence operations.

The signals intelligence market reached $16.8 billion in 2024 and is projected to grow to $28.1 billion by 2034, driven primarily by AI and advanced sensor technologies. North America leads globally, with the United States alone contributing $5 billion in 2024. These investments reflect the expanding volume of communications to monitor: 5G networks, satellite constellations, and IoT devices have dramatically increased the number of potential signals requiring analysis.

Law enforcement applications have also expanded significantly. The FBI’s need for AI-powered video analysis emerged from the 2013 Boston Marathon bombing, when investigators faced the challenge of rapidly reviewing enormous amounts of video footage to identify suspects. Today, platforms like TRULEO provide AI agent capabilities that automate open source intelligence analysis, jail call network linking, cross-database intelligence gathering, and case brief generation. Multitude Insights recently secured $10 million to develop AI-powered intelligence sharing that helps agencies distribute and analyze critical information across jurisdictions in real time.
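The market projections quoted in this article are easier to compare once converted to an implied compound annual growth rate (CAGR). The short calculation below uses only the dollar figures and years stated in the text; the helper name `cagr` is introduced here for illustration.

```python
def cagr(start_value, end_value, years):
    """Compound annual growth rate implied by growing from start_value to end_value."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# AI in video surveillance: $6.51B (2024) to $28.76B (2030)
video = cagr(6.51, 28.76, 2030 - 2024)

# Signals intelligence: $16.8B (2024) to $28.1B (2034)
sigint = cagr(16.8, 28.1, 2034 - 2024)

print(f"video surveillance AI: {video:.1%}")  # 28.1%
print(f"signals intelligence:  {sigint:.1%}")  # 5.3%
```

On these figures, the AI video surveillance projection implies roughly 28 percent annual growth, while the signals intelligence projection implies roughly 5 percent.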

Privacy Concerns and the Civil Liberties Tradeoffs

The expansion of AI surveillance capabilities has generated substantial concern among privacy advocates and civil liberties organizations. Facial recognition technology demonstrates these tensions clearly: a 2019 National Institute of Standards and Technology study found that many facial recognition algorithms produced false positive rates 10 to 100 times higher for Asian and African American faces than for white faces, and misidentifications have led to documented cases of wrongful arrests. The U.S. Commission on Civil Rights has warned that unregulated use poses significant risks to civil rights, particularly for marginalized communities.

The tradeoff between security effectiveness and privacy protection remains fundamentally unresolved. Real-time crime centers using AI may reduce crime rates and emergency response times: one study suggested potential reductions of 30 to 40 percent in crime and 20 to 35 percent in response times. Yet the same systems enable mass surveillance that can chill free speech, target political dissidents, and normalize constant monitoring. In authoritarian contexts, these tools have been deployed to monitor protests, track minority groups, and suppress dissent.

New technical approaches are emerging that attempt to circumvent privacy restrictions. Police and federal agencies have begun using AI models that track individuals through physical attributes like body size, clothing, and accessories, avoiding facial recognition bans while raising similar surveillance concerns. The ACLU has identified this as the first instance of a nonbiometric tracking system used at scale in the United States, warning it introduces new privacy concerns at a time when agencies are expanding monitoring of protesters and immigrants.
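The demographic disparity in facial recognition error rates cited earlier in this section is typically expressed as a ratio of false positive rates between groups. The sketch below shows that arithmetic on synthetic counts; the group names and numbers are invented for illustration and are not taken from any study.

```python
def false_positive_rate(false_matches, impostor_comparisons):
    """Share of different-person comparisons that the system wrongly reported as a match."""
    if impostor_comparisons <= 0:
        raise ValueError("impostor_comparisons must be positive")
    return false_matches / impostor_comparisons


def disparity_ratios(counts):
    """Express each group's false positive rate relative to the lowest-rate group."""
    rates = {group: false_positive_rate(*pair) for group, pair in counts.items()}
    baseline = min(rates.values())
    if baseline == 0:
        raise ValueError("baseline rate is zero; ratios are undefined")
    return {group: rate / baseline for group, rate in rates.items()}


# Synthetic evaluation counts: (false matches, impostor comparisons) per group.
synthetic_counts = {
    "group_a": (10, 100_000),
    "group_b": (250, 100_000),
}
ratios = disparity_ratios(synthetic_counts)
for group, ratio in ratios.items():
    print(f"{group}: {ratio:.1f}x the lowest false positive rate")
```

A ratio well above 1 for any group means that group bears a disproportionate share of false matches, even when the system's overall accuracy figure looks strong.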

Implementation Challenges and Common Deployment Pitfalls

Organizations deploying AI surveillance systems frequently underestimate the infrastructure requirements and operational complexity involved. Enterprise video surveillance in 2025 demands robust network bandwidth for high-resolution video streams, substantial storage capacity for retention requirements, and computing resources for real-time analytics. Network micro-segmentation and zero-trust architecture have become essential to mitigate security risks, as surveillance systems themselves represent attractive targets for attackers seeking to compromise or evade monitoring.

A critical limitation involves data quality and algorithmic bias. Researchers examining 13 police jurisdictions found strong evidence in nine that predictive policing systems had been trained on problematic data, perpetuating historical biases in arrest patterns. When input data reflects discriminatory practices, AI systems amplify rather than correct those patterns. The Chicago Police Department suspended its predictive policing program following damning reports about data quality and effectiveness.

Organizations must also address the human factors in AI-assisted surveillance. Technology alone does not improve security; effective deployment requires training programs that emphasize collaboration between AI tools and human operators. The most successful implementations in 2025 follow a pattern of specialized AI agents working together rather than attempting to replace entire analyst teams with generalist systems. Each agent becomes expert in a narrow domain, collectively making human analysts more effective rather than replacing their judgment.
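The claim that systems trained on skewed records amplify existing patterns can be illustrated with a deliberately simplified toy model. The sketch assumes two districts with identical true incident rates, a single patrol sent each day to whichever district has more recorded incidents, and incidents being recorded only where the patrol is present. These rules are hypothetical and far cruder than any real system; they exist only to show the feedback mechanism.

```python
def simulate_patrols(recorded, true_rate_per_day, days):
    """Toy feedback loop: patrol the district with the most records, record only there."""
    recorded = list(recorded)
    for _ in range(days):
        # The allocation rule trusts historical records.
        target = recorded.index(max(recorded))
        # Only the patrolled district's incidents enter the dataset.
        recorded[target] += true_rate_per_day[target]
    return recorded


start = [11, 10]  # a small historical skew toward district 0
end = simulate_patrols(start, true_rate_per_day=[1, 1], days=100)

print("start share of district 0:", round(start[0] / sum(start), 3))
print("end share of district 0:  ", round(end[0] / sum(end), 3))
print(end)  # [111, 10]
```

Although both districts have the same underlying rate, the one-incident head start grows into a dataset in which district 0 accounts for more than 90 percent of records, because the data collected depends on decisions made from earlier data.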

Regulatory Frameworks Shaping AI Surveillance Deployment

The regulatory landscape for AI surveillance is evolving rapidly but remains fragmented. In the United States, fifteen states had enacted laws limiting police use of facial recognition by the end of 2024, with increasingly strong guardrails. However, comprehensive federal legislation has not materialized despite Congressional attention to the issue. The National Academies has explicitly called for federal action, noting that advances in facial recognition technology have outpaced laws and regulations.

The European Union has taken the most aggressive regulatory stance through its AI Act, which entered into force in August 2024 and whose prohibitions began to apply in February 2025. Article 5 prohibits placing on the market or using AI systems that assess the risk of an individual committing a crime based solely on profiling or personality traits, which rules out that form of predictive policing. The Act also sharply restricts real-time remote biometric identification, including facial recognition, in publicly accessible spaces for law enforcement purposes, permitting it only in narrowly defined cases and pushing vendors toward alternatives like post-event forensic analysis and on-device encryption for personal data. Organizations operating internationally must now navigate compliance requirements that vary significantly by jurisdiction, with the EU framework serving as an increasingly influential model for other regions.

How to Prepare

- **Conduct a thorough assessment** of current infrastructure, including camera placements and coverage gaps, network bandwidth and power availability, storage capacity and retention requirements, and integration needs with existing platforms.

- **Inventory data sources and establish data governance** to ensure the quality of information feeding AI systems; dirty data produces unreliable outputs regardless of algorithmic sophistication.

- **Define clear use cases and success metrics** before evaluating vendors, as surveillance AI capabilities vary dramatically and matching technology to actual operational needs prevents costly mismatches.

- **Establish an AI governance board** that includes security, legal, ethics, and compliance representatives to oversee implementation decisions and ongoing operation.

- **Develop training programs** that emphasize human-AI collaboration rather than replacement, ensuring staff understand both capabilities and limitations of the systems they will use.
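The infrastructure assessment in the first step usually starts with back-of-the-envelope sizing for storage and bandwidth. The sketch below shows that arithmetic; the camera count, the 4 Mbps per-camera bitrate, the 30-day retention period, and the 25 percent bandwidth headroom are example assumptions, not recommendations.

```python
def storage_terabytes(cameras, bitrate_mbps, retention_days, recording_fraction=1.0):
    """Approximate storage needed, in decimal terabytes (1 TB = 1e12 bytes).

    recording_fraction is the share of time each camera actually records,
    e.g. 0.3 for motion-triggered recording that is active 30% of the time.
    """
    seconds = retention_days * 24 * 60 * 60
    total_bits = cameras * bitrate_mbps * 1_000_000 * seconds * recording_fraction
    return total_bits / 8 / 1e12


def uplink_mbps(cameras, bitrate_mbps, headroom=1.25):
    """Aggregate network throughput if every stream is sent upstream, with headroom."""
    return cameras * bitrate_mbps * headroom


# Example: 100 cameras at 4 Mbps, recording continuously, retained for 30 days.
tb = storage_terabytes(cameras=100, bitrate_mbps=4, retention_days=30)
mbps = uplink_mbps(cameras=100, bitrate_mbps=4)

print(f"storage: {tb:.1f} TB")   # 129.6 TB
print(f"uplink:  {mbps:.0f} Mbps")  # 500 Mbps
```

Running the same numbers with a lower `recording_fraction` shows how much motion-triggered or edge-filtered recording can reduce storage compared with continuous capture.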

How to Apply This

- **Start with high-value, low-risk applications** such as after-hours monitoring or compliance scanning of archived communications, building operational experience before expanding to sensitive real-time use cases.

- **Implement comprehensive logging and audit trails** for all AI-generated decisions, maintaining records that enable human review of system performance and support compliance with emerging regulations.

- **Establish feedback loops** between human analysts and AI systems, using analyst corrections to improve system performance over time while ensuring humans remain in the decision loop for consequential actions.

- **Review and update policies quarterly** as both technology capabilities and regulatory requirements evolve rapidly. Governance frameworks established at deployment will require ongoing refinement.
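The audit-trail step above can be made tamper-evident by chaining each log entry to the previous one with a hash, so that later edits to a record are detectable. The following is a minimal in-memory sketch using Python's standard `hashlib` and `json` modules; the `AuditLog` class and the record fields (`event_id`, `ai_output`, `human_decision`) are hypothetical names chosen for the example, and a production system would also need durable storage and access controls.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


class AuditLog:
    """Append-only log in which each entry's hash covers the previous entry's hash."""

    def __init__(self):
        self.entries = []

    @staticmethod
    def _digest(prev_hash, record):
        # sort_keys gives a stable serialization so the same record always hashes the same.
        payload = json.dumps(record, sort_keys=True)
        return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()

    def append(self, record):
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        stored = json.loads(json.dumps(record))  # store a copy, not the caller's dict
        self.entries.append({
            "record": stored,
            "prev_hash": prev_hash,
            "hash": self._digest(prev_hash, stored),
        })

    def verify(self):
        """Recompute the chain; return False if any entry was altered or reordered."""
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            if entry["prev_hash"] != prev_hash:
                return False
            if entry["hash"] != self._digest(prev_hash, entry["record"]):
                return False
            prev_hash = entry["hash"]
        return True


log = AuditLog()
log.append({"event_id": "evt-001", "ai_output": "flag", "human_decision": "confirmed"})
log.append({"event_id": "evt-002", "ai_output": "flag", "human_decision": "dismissed"})
print(log.verify())  # True
```

Recording the human decision alongside the AI output in each entry also supports the feedback-loop step, since disagreements between the two can be pulled from the log for review.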

Expert Tips

- Deploy specialized AI agents with narrow, well-defined responsibilities rather than attempting to build generalist systems that replace entire analyst functions; focused agents consistently outperform general-purpose alternatives.

- Maintain visibility into all AI agent activity across your environment by inventorying SaaS connections and mapping data access before implementing controls, as you cannot secure what you cannot see.

- Do not rely solely on vendor accuracy claims; conduct independent validation against your actual data and use cases, as performance varies significantly across environments.

- Build regulatory compliance requirements into deployment from the beginning rather than attempting to retrofit them later; the EU AI Act and similar frameworks impose requirements that are costly to address after the fact.

- Avoid deploying AI surveillance for use cases where human judgment remains essential for legal or ethical reasons, particularly decisions with significant individual consequences like employment or criminal justice involvement.

Conclusion

AI systems for surveillance and communications analysis have matured from experimental tools to operational necessities across government, enterprise, and public safety sectors. The technology enables processing volumes of data that would overwhelm human analysts, delivering real-time threat detection, pattern recognition, and intelligence extraction at scale. Market projections for AI video surveillance point to continued double-digit annual growth as organizations face expanding data volumes and evolving security requirements.

However, deployment demands careful attention to technical infrastructure, data quality, privacy implications, and regulatory compliance. The documented risks of bias, the tensions between security and civil liberties, and the rapidly evolving legal landscape require organizations to approach implementation thoughtfully. Success depends not on technology alone but on governance frameworks, human-AI collaboration models, and ongoing validation that systems perform as intended without producing unacceptable harms. Organizations that invest in this foundation will realize the genuine benefits these technologies offer while managing the substantial risks they present.
