ONDS (Operational Neural Distributed Systems) represents one of the earliest attempts to create a coordinated autonomous platform for military operations, designed to handle distributed decision-making across multiple unmanned systems without constant human operator intervention. Rather than individual robots responding to real-time commands from ground control stations, ONDS enabled a network of autonomous agents to interpret mission objectives and coordinate their actions dynamically, marking a significant shift in how militaries approached robotic warfare.

The platform emerged in the early 2010s as researchers sought to address a fundamental challenge: traditional teleoperated systems suffered from latency and operator fatigue, and they could not scale beyond what a small team of humans could manage. The problem was particularly acute in environments where radio communication was degraded or denied. ONDS distinguished itself by attempting to give robots genuine autonomy within clearly defined operational boundaries, rather than simple automation or pre-programmed behaviors. A patrol operation might specify “secure the perimeter and report on movement” rather than “move to GPS coordinates A, then B, then C,” allowing the system to adapt to obstacles, threats, or changing environmental conditions in real time.

Table of Contents

- How Did ONDS Implement Distributed Autonomous Decision-Making?

- The Sensor Fusion and Navigation Challenges That Plagued Early ONDS Deployments

- Real-World Operational Deployments and What They Revealed

- Comparing ONDS to Competing Autonomy Approaches of Its Era

- The Integration Problem and Safety Constraints That Limited ONDS Adoption

- The Software Architecture and Common Failure Modes

- The Evolution Beyond ONDS and the Lessons Learned for Modern Autonomous Systems

- Conclusion

How Did ONDS Implement Distributed Autonomous Decision-Making?

ONDS operated on the principle of hierarchical command with autonomous execution at lower levels. High-level commanders would issue strategic objectives to a network of units (aerial drones, ground robots, and sensor platforms), which would then negotiate tasks among themselves using local communications. Each unit maintained situational awareness through sensor fusion and could replan its movements and actions without waiting for confirmation from human operators.

This distributed approach meant that the loss of communication with the central command wouldn’t cause the entire operation to collapse; instead, units would continue executing the last known mission parameters while adjusting tactically. The technical architecture relied on real-time decision trees and rule-based systems that could evaluate threats, assess mission progress, and select appropriate actions. For example, a group of ground robots sweeping a building would detect a previously unmapped corridor, share that information with each other through mesh networking, and algorithmically determine which units should investigate further—all without a human operator explicitly directing them to do so. The comparison to earlier systems is striking: a teleoperated platform in the same scenario would have required operators to visually identify the corridor through a camera feed, communicate the finding to command, wait for orders, and then relay instructions back to individual units—a process taking minutes instead of seconds.
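The corridor example can be illustrated with a minimal sketch of decentralized task allocation. This is not the ONDS algorithm, which the source does not specify; it assumes a simple greedy rule in which each task goes to the nearest free unit. The names `Unit`, `bid`, and `allocate` are hypothetical.

```python
from dataclasses import dataclass
from math import hypot


@dataclass(frozen=True)
class Unit:
    name: str
    x: float
    y: float
    busy: bool = False


def bid(unit: Unit, task_xy: tuple[float, float]) -> float:
    """Cost a unit would 'bid' for a task: here, straight-line distance."""
    return hypot(unit.x - task_xy[0], unit.y - task_xy[1])


def allocate(units: list[Unit], tasks: dict[str, tuple[float, float]]) -> dict[str, str]:
    """Greedily assign each task to the cheapest still-free unit.

    Every unit can run this on the same shared inputs and reach the same
    answer, so no central coordinator is needed. Ties break on unit name.
    """
    free = [u for u in units if not u.busy]
    assignment: dict[str, str] = {}
    for task_name in sorted(tasks):  # deterministic task order
        if not free:
            break
        winner = min(free, key=lambda u: (bid(u, tasks[task_name]), u.name))
        assignment[task_name] = winner.name
        free.remove(winner)
    return assignment


# Hypothetical scenario: three ground robots, one newly discovered corridor.
units = [Unit("ugv-1", 0.0, 0.0), Unit("ugv-2", 10.0, 0.0), Unit("ugv-3", 5.0, 5.0, busy=True)]
tasks = {"investigate-corridor": (9.0, 1.0)}
result = allocate(units, tasks)
```

Because the rule is deterministic, units that share the same position and task data over the mesh network arrive at the same assignment independently, which is the property that lets the group keep working when the link to central command drops.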

The Sensor Fusion and Navigation Challenges That Plagued Early ONDS Deployments

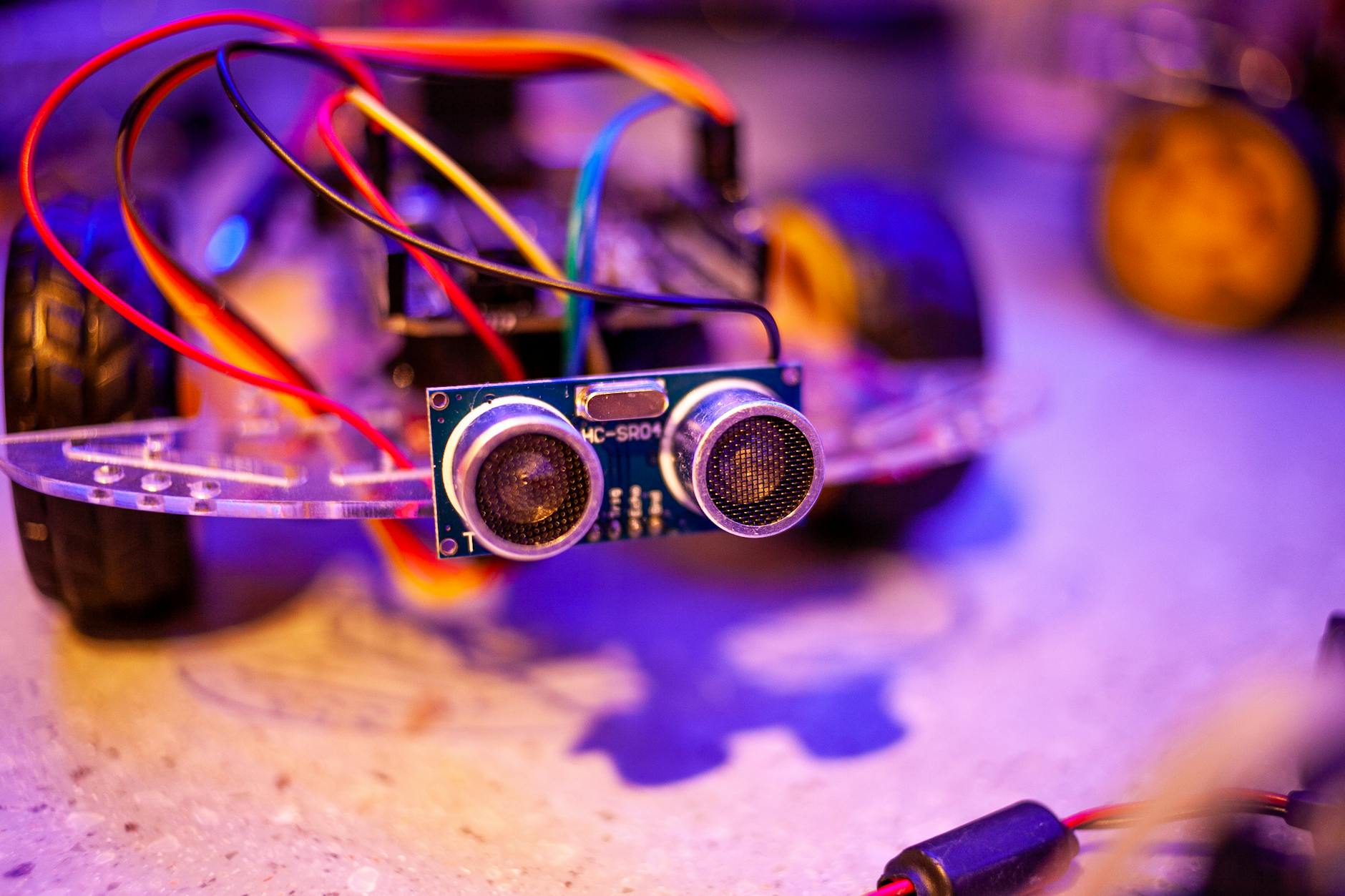

While distributed autonomy offered significant advantages, it introduced critical vulnerabilities that early ONDS systems struggled to overcome. Autonomous navigation relied heavily on LIDAR, visual odometry, and inertial measurement units, all of which could be degraded or fail in challenging environments. A significant limitation emerged during operations in urban areas with dense vegetation or complex architecture: robots would accumulate navigational drift as they moved farther from initial GPS locks, and the system lacked a mechanism to periodically re-localize without returning to known positions. This meant that after extended autonomous operations, a robot’s internal map of the world could diverge significantly from reality, leading to collision or targeting errors.
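To see why drift compounds with distance, consider a toy dead-reckoning model. It assumes a robot driving in a straight line whose heading estimate picks up a small constant bias on every step, as a crude stand-in for uncorrected inertial error. The function `dead_reckon` and its parameters are illustrative, not taken from ONDS.

```python
from math import cos, hypot, radians, sin


def dead_reckon(steps: int, step_m: float, heading_bias_deg: float) -> float:
    """Return position error in metres after driving straight for `steps` steps.

    The true robot drives along the x-axis. The estimate integrates the same
    step length, but its heading drifts by `heading_bias_deg` on every step.
    """
    true_x, true_y = 0.0, 0.0
    est_x, est_y = 0.0, 0.0
    est_heading = 0.0
    for _ in range(steps):
        true_x += step_m
        est_heading += radians(heading_bias_deg)
        est_x += step_m * cos(est_heading)
        est_y += step_m * sin(est_heading)
    return hypot(est_x - true_x, est_y - true_y)


# Error after 10, 100, and 500 one-metre steps with a 0.1 degree-per-step bias.
errors = {n: dead_reckon(n, step_m=1.0, heading_bias_deg=0.1) for n in (10, 100, 500)}
```

In this model the error grows faster than the distance travelled, because each step is integrated along an increasingly wrong heading. A periodic re-localization fix would reset the estimate, and that is the mechanism the early systems lacked.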

Another critical downside was the conservatism built into the distributed decision-making process itself. While removing the human from the decision loop sped up tactical reactions to immediate threats, the algorithms governing autonomous behavior had to be conservative to avoid catastrophic errors. A robot detecting an unknown person had to apply strict rules-of-engagement logic rather than making context-dependent judgment calls, a limitation that created both false positives (engagements with non-combatants) and false negatives (missed actual threats). The early versions of ONDS actually performed worse than skilled human operators in ambiguous situations precisely because autonomy required certainty, while human judgment operates in shades of probability.

Real-World Operational Deployments and What They Revealed

ONDS saw limited field deployment in the early 2010s, primarily in training exercises and highly controlled operational environments where the rules of engagement were clear and the environment relatively well-mapped. One well-documented trial involved a team of six ground robots tasked with securing a perimeter around a forward operating base while human supervisors monitored them continuously for safety. The autonomous system successfully coordinated a patrol pattern, detected and reported movement anomalies, and maintained formation without direct operator intervention for nearly eight hours, a stark demonstration of its capabilities compared to the operator fatigue that would plague traditional teleoperation over the same duration.

However, a more sobering example came during trials in semi-urban terrain where the system encountered unexpected obstacles not present in its training maps. One robot, detecting what its algorithms classified as a potential threat, moved into a flanking position that happened to collide with a structure partially obscured in its sensor data. The incident underscored a critical gap: autonomous systems could perform well in controlled, well-understood scenarios but struggled with the chaos and ambiguity inherent in real military operations. This specific failure became a catalyst for rethinking how ONDS integrated human oversight rather than removing it entirely.

Comparing ONDS to Competing Autonomy Approaches of Its Era

The military robotics landscape of the early 2010s offered several competing visions for autonomous systems. One approach, championed by different research institutions, emphasized “human-in-the-loop” systems where autonomous agents made tactical recommendations that operators could approve or override. Another prioritized full autonomy within narrow, well-defined operational windows—essentially sophisticated, pre-programmed behaviors executed without human input. ONDS attempted a middle ground: autonomous execution within loose strategic constraints, with human operators maintaining the ability to intervene but not required to micromanage every decision.

The tradeoff ONDS made was clear: it offered greater speed and scalability than human-controlled systems but less flexibility and judgment than optimal human decision-making. Compared to fully scripted systems, ONDS could adapt to environmental changes and unexpected obstacles. Compared to human operators, it was exhaustion-proof and could coordinate larger numbers of units simultaneously. But this middle ground also meant it excelled at neither extreme—it wasn’t as responsive as a human operator in ambiguous situations, nor as reliable as a system with completely predictable, pre-programmed behaviors.

The Integration Problem and Safety Constraints That Limited ONDS Adoption

One of the most underappreciated challenges facing ONDS was the integration problem: autonomous vehicles designed by different manufacturers, running different software stacks, and equipped with incompatible sensor suites had to operate as a single network. Early ONDS implementations often required significant custom software layers to translate between different communication protocols and sensor data formats. A warning worth emphasizing: this technical fragmentation created severe safety and reliability risks. If one platform’s representation of the operational environment differed from another’s, the distributed decision-making process could lead to units working at cross-purposes or missing critical information.
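A translation layer of this kind can be sketched as a set of adapters that map each vendor's message into one common record. The two vendor formats below are invented for illustration (the source names no actual formats), as are `Detection`, `ADAPTERS`, and `normalize`.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Detection:
    """Common format: position in metres in a shared east/north frame."""
    source: str
    east_m: float
    north_m: float


def from_vendor_a(msg: dict) -> Detection:
    # Hypothetical vendor A reports metres as {"id", "x", "y"} with x = east.
    return Detection(msg["id"], float(msg["x"]), float(msg["y"]))


def from_vendor_b(msg: dict) -> Detection:
    # Hypothetical vendor B reports centimetres as {"unit", "pos_cm": [north, east]}.
    north_cm, east_cm = msg["pos_cm"]
    return Detection(msg["unit"], east_cm / 100.0, north_cm / 100.0)


ADAPTERS = {"vendor_a": from_vendor_a, "vendor_b": from_vendor_b}


def normalize(vendor: str, msg: dict) -> Detection:
    """Translate a vendor message, refusing formats with no registered adapter."""
    try:
        adapter = ADAPTERS[vendor]
    except KeyError:
        raise ValueError(f"no adapter for vendor {vendor!r}") from None
    return adapter(msg)


# The same physical point reported by two platforms in two formats.
a = normalize("vendor_a", {"id": "ugv-1", "x": 12.0, "y": 3.5})
b = normalize("vendor_b", {"unit": "uav-7", "pos_cm": [350, 1200]})
```

The unit and axis-order differences in the two invented formats are exactly the sort of mismatch that, left untranslated, would give two platforms different pictures of the same environment. Rejecting unknown vendors outright, rather than guessing, keeps a malformed message out of the shared picture.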

The safety constraints imposed on ONDS systems were severe and, arguably, limited their practical value. Operators maintained the ability to remotely shut down any unit, and the system was required to maintain a communication link back to command at all times—a constraint that directly contradicted the whole purpose of autonomous operation in degraded communication environments. Additionally, rules of engagement had to be coded with such strict conservatism that the autonomous system frequently failed to act when human operators clearly would have. Over time, operators learned to work around these limitations by essentially taking back manual control during any genuinely ambiguous situation, which meant ONDS provided less operational advantage than its design suggested.
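The two constraints described here, a remote shutdown and a mandatory command link, can be sketched as a small watchdog. This is an assumed design for illustration only; the class `LinkWatchdog`, its state names, and the timeout value are hypothetical. Time is passed in explicitly so the behavior is easy to trace.

```python
from dataclasses import dataclass


@dataclass
class LinkWatchdog:
    """Tracks the command link and decides whether the unit may keep operating."""
    timeout_s: float
    last_heartbeat_s: float = 0.0
    shutdown_requested: bool = False

    def heartbeat(self, now_s: float) -> None:
        """Record that a message from command arrived at time `now_s`."""
        self.last_heartbeat_s = now_s

    def request_shutdown(self) -> None:
        # Operator kill switch: it latches, and this sketch has no reset.
        self.shutdown_requested = True

    def state(self, now_s: float) -> str:
        if self.shutdown_requested:
            return "shutdown"
        if now_s - self.last_heartbeat_s > self.timeout_s:
            return "hold"  # link lost: stop and wait rather than continue
        return "run"


dog = LinkWatchdog(timeout_s=2.0)
dog.heartbeat(now_s=10.0)
states = [dog.state(10.5), dog.state(13.0)]  # fresh link, then stale link
dog.request_shutdown()
states.append(dog.state(13.1))
```

The `"hold"` branch makes the contradiction concrete: a unit that must stop whenever the link goes stale cannot deliver autonomy in exactly the degraded-communication environments it was built for.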

The Software Architecture and Common Failure Modes

ONDS relied on a modular software architecture that separated perception (sensor interpretation), world modeling (building and maintaining a map of the environment), planning (deciding what actions to take), and execution (controlling the actual robot hardware). This separation, while theoretically clean, created opportunities for misalignment. A robot might successfully perceive an obstacle but fail to properly update its world model, then fail to plan around it, resulting in an execution error—a collision or missed objective. The developers implemented extensive logging and monitoring to catch these failures, but the early systems generated so much diagnostic data that operators struggled to identify actual problems in the noise.
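The misalignment described above can be reproduced in a toy version of the pipeline. The sketch below assumes a grid world and a deliberately naive planner, and it omits the execution stage. `WorldModel`, `perceive`, `plan`, and `run_cycle` are hypothetical names, and the `skip_update` flag simulates a dropped world-model update.

```python
from dataclasses import dataclass, field

Cell = tuple[int, int]  # (x, y) grid cell


@dataclass
class WorldModel:
    obstacles: set[Cell] = field(default_factory=set)

    def update(self, detections: list[Cell]) -> None:
        self.obstacles.update(detections)


def perceive(raw_scan: list[Cell]) -> list[Cell]:
    """Perception stage: in this toy, the scan already lists occupied cells."""
    return list(raw_scan)


def plan(world: WorldModel, start: Cell, goal_x: int) -> list[Cell]:
    """Planning stage: head east, stepping one row north when the next cell is blocked.

    Toy planner: it only checks the cell directly east, not the detour cell.
    """
    x, y = start
    path = [start]
    while x < goal_x:
        if (x + 1, y) in world.obstacles:
            y += 1
        else:
            x += 1
        path.append((x, y))
    return path


def run_cycle(scan, world, start, goal_x, skip_update=False):
    """One perceive -> model -> plan cycle, plus a cross-stage consistency check."""
    detections = perceive(scan)
    if not skip_update:  # skip_update=True simulates the dropped world-model update
        world.update(detections)
    path = plan(world, start, goal_x)
    # Cross-check the plan against what perception reported this cycle.
    conflicts = [cell for cell in path if cell in detections]
    return path, conflicts


scan = [(2, 0)]
ok_path, ok_conflicts = run_cycle(scan, WorldModel(), (0, 0), goal_x=4)
bad_path, bad_conflicts = run_cycle(scan, WorldModel(), (0, 0), goal_x=4, skip_update=True)
```

In the faulty cycle the planner drives straight through a cell that perception had flagged, and only the cross-stage check catches it. A single targeted check like this is far easier for an operator to act on than a large volume of undifferentiated diagnostic logs.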

A specific failure mode that plagued early ONDS deployments was the “ghost obstacle” phenomenon. Sensors would occasionally detect spurious obstacles—a reflection, a transient piece of debris, or a sensor ghost—that the system would then incorporate into its world model. Other units in the network would receive this information and factor it into their own planning, creating a cascading error where multiple robots began avoiding a non-existent obstacle. The solution required either very aggressive filtering (which risked missing real obstacles) or human operator intervention to correct the shared world model.
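One form the filtering option could take is a confirmation rule: an obstacle enters the shared map only after a minimum number of distinct units have reported it. The sketch below is an assumed design, not the ONDS implementation; `SharedObstacleFilter` and `min_reporters` are hypothetical names.

```python
from collections import defaultdict

Cell = tuple[int, int]  # (x, y) grid cell


class SharedObstacleFilter:
    """Promote an obstacle to the shared map only after enough independent reports."""

    def __init__(self, min_reporters: int = 2) -> None:
        self.min_reporters = min_reporters
        self._reporters: dict[Cell, set[str]] = defaultdict(set)

    def report(self, unit: str, cell: Cell) -> bool:
        """Record a sighting; return True once the cell counts as confirmed."""
        self._reporters[cell].add(unit)  # repeat reports from one unit count once
        return len(self._reporters[cell]) >= self.min_reporters

    def confirmed(self) -> set[Cell]:
        """Cells that enough distinct units have reported."""
        return {c for c, units in self._reporters.items() if len(units) >= self.min_reporters}


f = SharedObstacleFilter(min_reporters=2)
results = [
    f.report("ugv-1", (4, 2)),  # first sighting
    f.report("ugv-1", (4, 2)),  # same unit again: still unconfirmed
    f.report("ugv-2", (4, 2)),  # second distinct unit confirms it
    f.report("ugv-3", (9, 9)),  # lone sighting, possibly a sensor ghost
]
shared_map = f.confirmed()
```

The trade-off named above shows up directly in `min_reporters`: raising it suppresses more ghosts but also delays or drops real obstacles that only one unit is positioned to see.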

The Evolution Beyond ONDS and the Lessons Learned for Modern Autonomous Systems

ONDS demonstrated both the potential and the limitations of early attempts to create truly autonomous military systems. Its successors moved away from the strict distributed autonomy model toward systems that preserved greater human oversight while still benefiting from autonomous optimization of low-level tactics. Modern autonomous military platforms retain many of ONDS’s insights about distributed sensing and communication, but they generally maintain tighter connections between human operators and autonomous agents rather than attempting to remove humans from the decision loop entirely.

The platform’s legacy extends beyond military applications. ONDS’s experience with sensor fusion, multi-agent coordination, and the challenges of autonomous operation in uncertain environments directly influenced the design of civilian robotics applications in search and rescue, environmental monitoring, and infrastructure inspection. Developers learned from ONDS’s failures that perfect autonomy was less valuable than reliable autonomy, and that human oversight—properly integrated—often improved overall system performance rather than degrading it.

Conclusion

ONDS represented an ambitious attempt to create genuinely autonomous military platforms in an era when the technical capability was advancing rapidly but operational experience was minimal. By attempting to remove humans from tactical decision-making while maintaining high-level strategic control, ONDS exposed fundamental challenges in autonomous system design: the difficulty of achieving reliable perception and localization in complex environments, the gap between autonomous performance in controlled scenarios versus real-world chaos, and the persistent value of human judgment in ambiguous situations.

The platform’s influence on modern robotics and autonomous systems research remains significant, not because it achieved its original vision, but because its failures were instructive. Contemporary autonomous systems engineers still grapple with the same fundamental questions ONDS raised: how much autonomy is appropriate, where should humans remain in the decision loop, and how can systems maintain reliable operation when perfect information is impossible. For organizations developing autonomous platforms today, understanding ONDS’s journey from promising concept to operational complications provides essential context for avoiding similar pitfalls.