ZBRA has positioned itself as a dominant force in robotics vision systems by offering a comprehensive, end-to-end platform that rivals the breadth and accessibility of Google’s product ecosystem. Like Google’s ability to handle search, advertising, cloud services, and enterprise tools under one roof, ZBRA provides integrated vision hardware, software, and AI models that work seamlessly together—allowing manufacturers and logistics operators to deploy sophisticated visual automation without piecing together disparate systems from multiple vendors. For example, a warehouse operator can implement ZBRA’s hardware for bin picking, use ZBRA’s software stack for training and deployment, and access pre-built models for common industrial tasks, all through a unified interface and support ecosystem.

This consolidation of capabilities represents a significant shift in how industrial facilities approach computer vision. Rather than hiring specialized teams to integrate cameras, processing units, and custom software, companies can now leverage ZBRA’s pre-engineered solutions that reduce time-to-deployment from months to weeks. The comparison to Google extends beyond product breadth—both companies have built moats through extensive data collection, continuous model improvement, and developer-friendly tools that make their platforms increasingly valuable the more they’re used.

Table of Contents

- What Makes ZBRA the Integrated Choice for Robotics Vision?
- Technical Architecture and the Reality of Vision System Complexity
- Real-World Applications Across Industrial Sectors
- Implementing ZBRA Systems in Your Operations
- Challenges, Limitations, and Where ZBRA Systems Can Fail
- Competitive Positioning and Market Alternatives
- The Future of Vision Systems and ZBRA’s Evolution
- Conclusion

What Makes ZBRA the Integrated Choice for Robotics Vision?

ZBRA distinguishes itself through vertical integration and accessibility that competitors typically reserve for either high-end research labs or boutique system integrators. The platform combines hardware (depth cameras, edge processors), software (training frameworks, model deployment tools), and pre-trained models into a stack designed for non-specialists. A food processing facility, for instance, can deploy ZBRA’s defect detection system without requiring a Ph.D. in machine learning—the models are already trained on millions of product images, and the interface guides users through configuration rather than demanding custom coding.

This accessibility is where the Google comparison becomes apt. Google made search, email, and office productivity tools so intuitive that they penetrated organizations of all sizes; ZBRA is doing the same for industrial vision. The company has invested heavily in documentation, community resources, and pre-built solutions for common tasks like quality control, palletization, and assembly verification. Competitors, by contrast, often require systems integrators as middlemen, which adds cost and delays implementation.

Technical Architecture and the Reality of Vision System Complexity

Under the surface, ZBRA’s platform relies on convolutional neural networks, stereo vision processing, and real-time edge inference—the same foundational technologies used by academic research groups and Fortune 500 companies. However, the key limitation is that no vision system can overcome physics: lighting variation, material reflectivity, and occlusion remain stubborn challenges regardless of how sophisticated the algorithms are. A manufacturer attempting to inspect glossy or reflective parts often discovers that ZBRA’s pre-trained models require significant custom training, which reintroduces the complexity the platform was supposed to eliminate.
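To make the physics point concrete, here is a minimal sketch of the textbook triangulation behind stereo depth, Z = f * B / d, along with its first-order error term. It is not ZBRA's implementation, which this article does not describe; the function names and camera parameters (focal length, baseline, matching error) are hypothetical values chosen for illustration.

```python
def depth_from_disparity(disparity_px: float, focal_px: float, baseline_m: float) -> float:
    """Depth in metres for a rectified stereo pair: Z = f * B / d."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive (zero means no match or infinite depth)")
    return focal_px * baseline_m / disparity_px


def depth_error(depth_m: float, focal_px: float, baseline_m: float,
                disparity_error_px: float) -> float:
    """First-order depth uncertainty: dZ ~= Z^2 / (f * B) * dd."""
    return depth_m ** 2 / (focal_px * baseline_m) * disparity_error_px


if __name__ == "__main__":
    # Hypothetical camera: 1000 px focal length, 10 cm baseline.
    f_px, b_m = 1000.0, 0.10
    for d in (100.0, 50.0, 25.0):
        z = depth_from_disparity(d, f_px, b_m)
        err = depth_error(z, f_px, b_m, disparity_error_px=0.25)
        print(f"disparity={d:5.1f} px  depth={z:.2f} m  error=+/-{err * 1000:.1f} mm")
```

Because the error grows with the square of the distance, any effect that corrupts disparity matching, such as specular highlights on glossy parts, costs more depth accuracy the farther the part is from the camera. Better algorithms can reduce the matching error but cannot remove this geometric relationship.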

The software stack includes tools for data labeling, model fine-tuning, and deployment to edge devices, but this is where users often encounter the hidden costs. A facility with unusual product variants or poor lighting conditions may need to invest in thousands of labeled images and weeks of iterative training to achieve acceptable accuracy. The promise of “train once, deploy everywhere” breaks down when real-world variability exceeds the training data’s diversity. ZBRA provides the tools to handle this, but it requires technical competence that not all manufacturers possess.

Real-World Applications Across Industrial Sectors

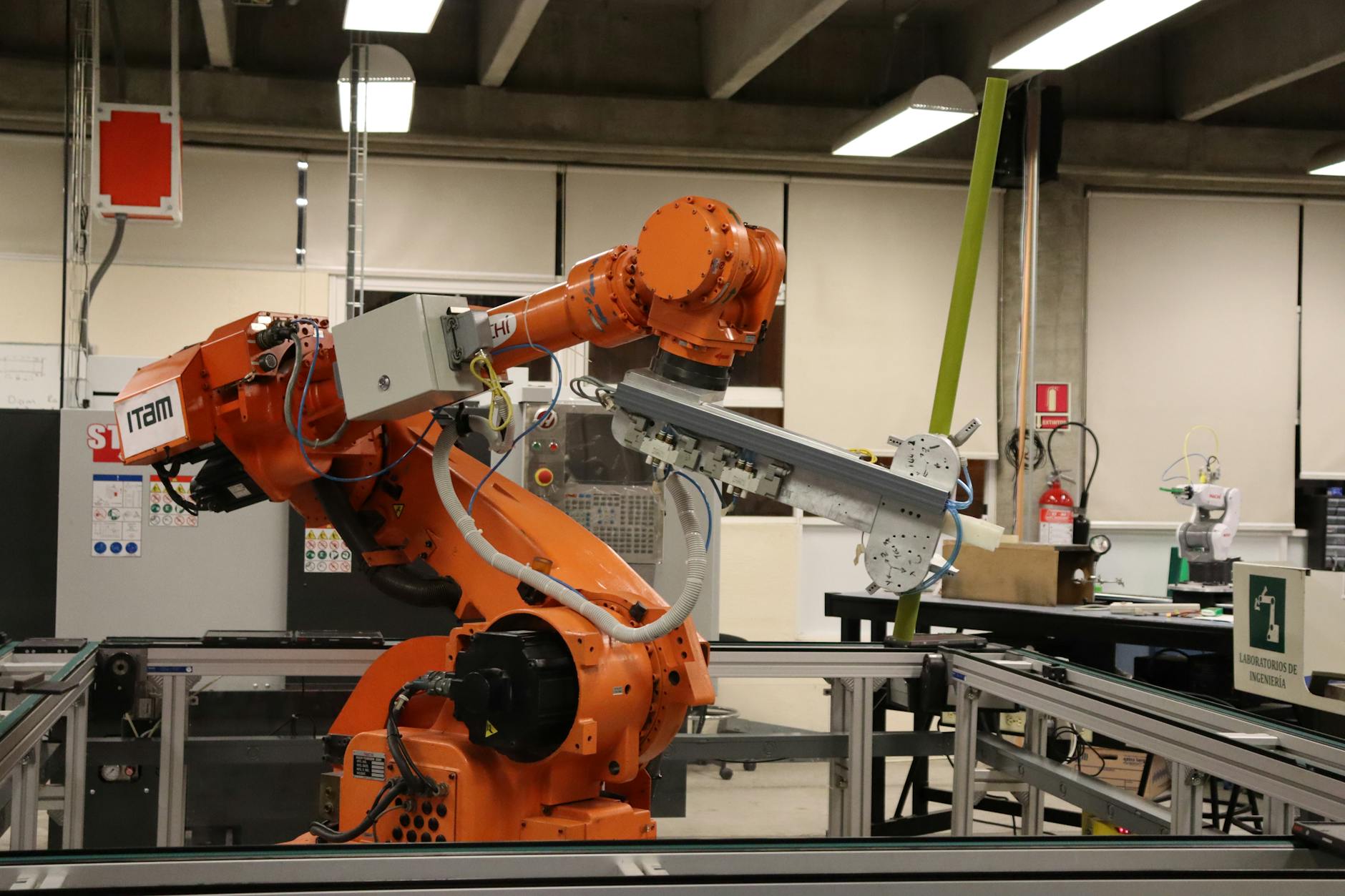

ZBRA’s vision systems have been deployed in e-commerce warehouses for bin picking, a task that combines object detection, grasp point identification, and real-time path planning. Amazon has publicly discussed the robotic picking challenges in its fulfillment centers, and companies using ZBRA’s systems report faster implementation timelines and higher accuracy rates than with custom-built alternatives. The difference is measurable: whereas a custom solution might take 18 months to develop and deploy, ZBRA-enabled systems can be operational in 3 to 6 months.

Automotive manufacturing represents another stronghold, where ZBRA’s systems handle everything from weld inspection to part orientation verification on assembly lines. A tier-one automotive supplier can run multiple stations with ZBRA cameras and processing units, all reporting to a centralized quality dashboard. Pharmaceutical companies use ZBRA systems for capsule and tablet inspection, where defect rates of even 0.1% can trigger recalls. In all these cases, the integration reduces the need for specialized vision engineering roles, though quality assurance expertise remains essential.

Implementing ZBRA Systems in Your Operations

Deploying ZBRA successfully requires a methodical approach, not just purchasing hardware. The first step is a capabilities assessment—understanding whether your application falls into ZBRA’s wheelhouse (well-defined objects, adequate lighting, reasonable tolerance for edge cases) or if it demands custom development. Many facilities discover this only after purchasing equipment, leading to frustration and underutilized investments. A preliminary pilot program, testing ZBRA’s solution on a subset of your operation, costs a fraction of full deployment and reveals integration challenges early.

The second consideration is workforce readiness. ZBRA makes the technology more accessible, but “more accessible” does not mean “no training required.” Operators need to understand how to interpret vision system alerts, when to trigger manual retraining, and how to recognize when system performance is drifting. Factories that treat vision system deployment as a software installation rather than a process change often see disappointing results. The tradeoff is efficiency gains (fewer manual inspections, faster throughput) against the time invested in operator training and system monitoring—and this tradeoff is favorable only when the training is actually conducted.

Challenges, Limitations, and Where ZBRA Systems Can Fail

One persistent limitation is generalization across product variations. A ZBRA system trained to detect defects in blue widgets will likely struggle with green widgets, even if the defect types are identical. This is not a failure of ZBRA specifically but a limitation of current computer vision technology: deep learning models often overfit to superficial features like color and require retraining or fine-tuning when inputs change materially. A manufacturer with high product variety should anticipate ongoing model maintenance rather than expecting a one-time training cycle.
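One way to spot this kind of generalization gap early is to break validation accuracy down by product variant instead of reporting a single overall number. The sketch below assumes you can export records of (variant, predicted label, true label) from your inspection runs. The function names, the 95% threshold, and the sample data are all hypothetical and not part of any ZBRA tool.

```python
from collections import defaultdict


def accuracy_by_variant(records):
    """records: iterable of (variant, predicted_label, true_label) tuples."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for variant, predicted, actual in records:
        total[variant] += 1
        if predicted == actual:
            correct[variant] += 1
    return {variant: correct[variant] / total[variant] for variant in total}


def variants_needing_retraining(records, min_accuracy=0.95):
    """Return the variants whose accuracy falls below the chosen threshold."""
    scores = accuracy_by_variant(records)
    return sorted(v for v, acc in scores.items() if acc < min_accuracy)


if __name__ == "__main__":
    # Made-up validation results: the model was trained mostly on blue widgets.
    sample = (
        [("blue", "defect", "defect")] * 48 + [("blue", "ok", "defect")] * 2
        + [("green", "defect", "defect")] * 30 + [("green", "ok", "defect")] * 20
    )
    print(accuracy_by_variant(sample))
    print(variants_needing_retraining(sample))
```

An aggregate score over this sample would hide the problem, since the blue results pull the average up. Reporting per variant shows which product lines need additional labeled images before they go live.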

Another critical warning: vision systems are vulnerable to gradual degradation from causes that a human would spot immediately. A dust-covered camera will degrade performance subtly. The system doesn’t fail catastrophically but produces increasingly incorrect predictions, which can go unnoticed for weeks if monitoring is lax. Lighting changes (seasonal shifts, burned-out fixtures) have similar effects. ZBRA provides monitoring tools to catch drift, but they require disciplined interpretation and response. Facilities that deploy the hardware and ignore the software diagnostics effectively have an unreliable system, and the blame falls on implementation practice, not the platform’s capabilities.
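The slow degradation described above can be caught with a simple rolling check on prediction confidence. The following is a minimal sketch, not a description of ZBRA's monitoring tools: the class name, window size, and drop threshold are hypothetical. It also assumes the model exposes a per-prediction confidence score and that a baseline mean was measured when the system was commissioned.

```python
from collections import deque


class ConfidenceDriftMonitor:
    """Flags drift when the rolling mean confidence falls well below a baseline."""

    def __init__(self, baseline_mean, window=200, max_drop=0.05):
        self.baseline_mean = baseline_mean  # measured at commissioning (assumed)
        self.max_drop = max_drop            # tolerated drop before alerting
        self.scores = deque(maxlen=window)  # keeps only the most recent scores

    def update(self, confidence):
        """Record one prediction confidence; return True if drift is flagged."""
        self.scores.append(confidence)
        if len(self.scores) < self.scores.maxlen:
            return False  # wait until the window is full
        rolling_mean = sum(self.scores) / len(self.scores)
        return (self.baseline_mean - rolling_mean) > self.max_drop


if __name__ == "__main__":
    monitor = ConfidenceDriftMonitor(baseline_mean=0.90, window=5, max_drop=0.05)
    # Simulated readings: a clean lens first, then a gradually dirtier one.
    readings = [0.90, 0.91, 0.89, 0.90, 0.90, 0.86, 0.83, 0.80, 0.78, 0.75]
    for i, score in enumerate(readings):
        if monitor.update(score):
            print(f"reading {i}: drift flagged, inspect camera and lighting")
```

Confidence is only a proxy for accuracy, and a model can be confidently wrong. A check like this works best alongside periodic manual audits of a labeled sample, and with a named owner who responds when the alert fires.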

Competitive Positioning and Market Alternatives

While ZBRA dominates in accessible, integrated solutions, competitors serve specific niches effectively. Cognex specializes in high-volume, high-reliability applications where the cost premium justifies extreme accuracy; their systems are standard in automotive and electronics manufacturing. Open-source alternatives (TensorFlow, PyTorch with custom hardware) appeal to companies with in-house deep learning expertise but require significant engineering investment. AWS RoboMaker and Azure Robotics offer cloud-based vision services, but latency and connectivity requirements make them unsuitable for real-time factory floor operations.
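The latency argument against cloud inference can be checked with a quick per-part time budget. The sketch below uses entirely hypothetical numbers (line speed, inference time, network round trip, handling overhead) and hypothetical function names; substitute measurements from your own line and network before drawing conclusions.

```python
def time_budget_ms(parts_per_minute: float) -> float:
    """Time available per part on the line, in milliseconds."""
    if parts_per_minute <= 0:
        raise ValueError("parts_per_minute must be positive")
    return 60_000.0 / parts_per_minute


def fits_budget(parts_per_minute: float, inference_ms: float,
                network_round_trip_ms: float = 0.0, overhead_ms: float = 0.0) -> bool:
    """True if capture-to-decision time fits inside the per-part budget."""
    total_ms = inference_ms + network_round_trip_ms + overhead_ms
    return total_ms <= time_budget_ms(parts_per_minute)


if __name__ == "__main__":
    line_speed = 600  # hypothetical: 600 parts per minute, i.e. 100 ms per part
    edge_ok = fits_budget(line_speed, inference_ms=30, overhead_ms=20)
    cloud_ok = fits_budget(line_speed, inference_ms=15,
                           network_round_trip_ms=80, overhead_ms=20)
    print(f"budget per part: {time_budget_ms(line_speed):.0f} ms")
    print(f"edge fits: {edge_ok}, cloud fits: {cloud_ok}")
```

In this made-up scenario the cloud model is faster at inference but still misses the budget once the network round trip is added. Real networks also add jitter, so a budget that fits on average can still fail on individual parts.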

ZBRA’s advantage lies in the integrated package and the community ecosystem around it. Like Google’s app marketplace, ZBRA’s platform has attracted third-party developers building specialized models and tools, which creates a network effect. A newcomer to industrial vision is more likely to find a solution in ZBRA’s ecosystem than to find equivalent support for a patchwork of open-source components. This positioning is defensible but not invulnerable; competitors are continuously improving accessibility and integration, and switching costs are relatively low if a facility’s requirements shift.

The Future of Vision Systems and ZBRA’s Evolution

As robotics becomes more commonplace in manufacturing, the vision systems industry will likely consolidate around platforms that balance sophistication with accessibility—precisely ZBRA’s strategy. Edge processing capabilities will continue advancing, enabling more complex models to run locally without cloud connectivity, which is critical for facilities with air-gapped networks or unreliable internet. ZBRA is investing in multi-modal inputs (combining vision with tactile sensors and force feedback), which represents the next frontier beyond purely visual decision-making. The longer-term question is whether ZBRA can maintain its position as the “Google of robotics vision” as competition intensifies.

Google held its position through continuous innovation, aggressive acquisition of complementary technology, and a willingness to enter adjacent markets. ZBRA will face similar pressures and opportunities. The company’s strength rests on having solved the integration problem at the right moment, but as the market matures, integration alone won’t suffice. Forward-looking operators should view current ZBRA deployments as part of a longer journey toward fully autonomous, multi-sensory manufacturing environments.

Conclusion

ZBRA has earned the comparison to Google not through dominance alone but through having solved a critical integration problem in robotics vision. By bundling hardware, software, and pre-trained models into an accessible platform, the company has democratized vision-based automation for manufacturers of all sizes. The results are measurable: faster deployment, lower barriers to entry, and fewer dependencies on specialized expertise.

However, this democratization comes with realistic limitations. Vision systems are not magic; they still require careful implementation, ongoing maintenance, and realistic expectations about generalization. Facilities considering ZBRA should approach deployment as a strategic investment in process automation, not a plug-and-play replacement for human inspection. Those that do, with proper training and monitoring, will find the platform lives up to its reputation as a transformative force in industrial automation.