GRRR, the stock ticker for Gorilla Technology, has emerged as a significant player in artificial intelligence infrastructure deployment. Although it is sometimes pitched as the “Tesla of AI,” Gorilla Technology is an established technology company, not a startup. In March 2026, it signed agreements to deploy approximately 640 NVIDIA HGX B200 servers carrying over 5,000 GPUs in India, a move that positions the company as a serious contender in the global race to build AI computational capacity. The expansion shows how established technology firms are adapting to the AI era, competing directly with newer entrants in the high-stakes business of supplying the compute that powers advanced AI systems.

The comparison to Tesla, a company that disrupted its industry and achieved rapid valuation growth, reveals something important about the current state of AI infrastructure. GRRR is not a startup with a revolutionary new technology; it represents the more traditional path of an existing corporation deploying proven hardware at massive scale to capture opportunity in an emerging market. That approach has distinct advantages and limitations compared with pure-play AI infrastructure startups.

Table of Contents

- What Does GRRR Actually Do in the AI Infrastructure Space?

- The Reality of AI Infrastructure as a Business Model

- The India Deployment and Regional AI Infrastructure Challenges

- Comparing Gorilla Technology’s Approach to Pure-Play AI Competitors

- The Hidden Risk in GPU Dependency

- How GRRR Differs from Established Cloud Providers

- The Uncertain Future of Infrastructure Advantage

- Conclusion

What Does GRRR Actually Do in the AI Infrastructure Space?

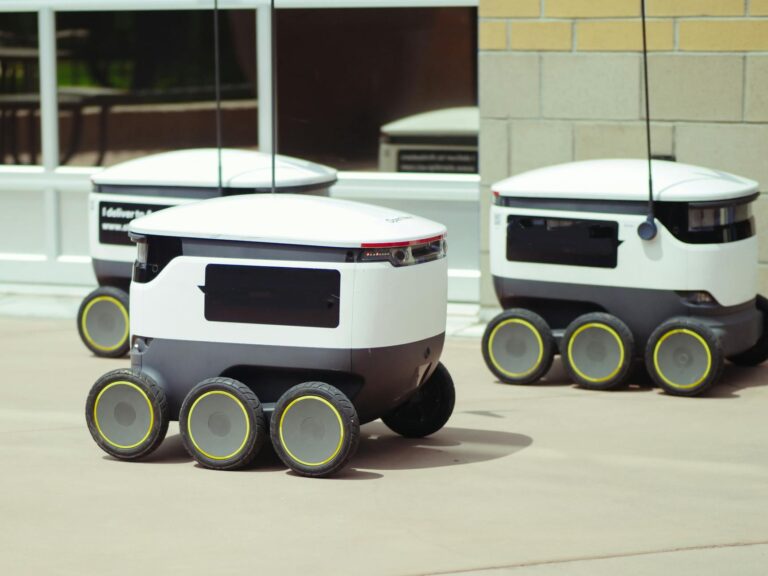

Gorilla Technology’s recent deployment strategy centers on providing computing power for artificial intelligence workloads at scale. The 640 NVIDIA HGX B200 servers are industrial-grade hardware designed for enterprise AI applications, machine learning training, and large-scale inference. This is not consumer-facing technology; it is the kind of infrastructure that powers data centers, research institutions, and companies building AI systems.
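The headline figure of “over 5,000 GPUs” follows directly from the server count. As a quick sanity check, the arithmetic can be sketched in Python, assuming each server is built on a single eight-GPU NVIDIA HGX B200 baseboard. The per-server figure is an assumption rather than a detail from the deployment agreements, and the helper name `total_gpus` is hypothetical.

```python
# Hypothetical helper for a back-of-the-envelope GPU count.
# Assumption: every server is built on one 8-GPU NVIDIA HGX B200 baseboard.
GPUS_PER_HGX_B200_SERVER = 8


def total_gpus(servers: int, gpus_per_server: int = GPUS_PER_HGX_B200_SERVER) -> int:
    """Return the total GPU count for a fleet of identical servers."""
    if servers < 0 or gpus_per_server <= 0:
        raise ValueError("servers must be >= 0 and gpus_per_server must be > 0")
    return servers * gpus_per_server


if __name__ == "__main__":
    fleet = 640  # approximate server count from the India agreements
    print(f"{fleet} servers x {GPUS_PER_HGX_B200_SERVER} GPUs = {total_gpus(fleet):,} GPUs")
```

Under that assumption, 640 servers come to roughly 5,120 GPUs, consistent with the “over 5,000” figure; because the server count is approximate, the exact total may differ.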

The India deployment specifically targets growing demand for AI computational resources in Asia, where regulatory environments and labor costs create different dynamics than in North America or Europe. The company’s December 2025 strategic minority investment in Astrikos.ai signals an intent to combine its software and infrastructure strategies: rather than selling commodity computing power, Gorilla Technology is positioning itself as a provider of complete AI infrastructure solutions. The distinction matters, because the “Tesla” comparison holds up better when a company controls multiple layers of the value chain, from hardware selection through software optimization.

The Reality of AI Infrastructure as a Business Model

The infrastructure play in AI is fundamentally different from the technology disruption Tesla represented. Computing power is ultimately a commodity: the GPUs and HGX platforms come from NVIDIA, every buyer gets the same chips, and the differentiators become deployment expertise, cost efficiency, and integration capabilities. GRRR therefore faces direct competition from hyperscalers such as Amazon Web Services, Google Cloud, and Microsoft Azure, which have vastly larger balance sheets and customer bases. Market timing and supply constraints add a further limitation.

The shortage of NVIDIA H100 and B200 GPUs has been a defining feature of 2024–2026, with demand far exceeding supply. Gorilla Technology’s ability to secure more than 5,000 GPUs is noteworthy, but it also highlights the bottleneck: the company’s growth is limited by NVIDIA’s production capacity, not by its own ability to deploy. If supply catches up with demand, possibly in late 2026 or 2027, the advantage of having secured hardware during the scarcity will fade. At that point, GRRR’s success will depend on operational excellence, pricing discipline, and customer relationships rather than privileged access to scarce resources.

The India Deployment and Regional AI Infrastructure Challenges

The India deployment responds to regional demand patterns that differ from those in Western markets. India has seen explosive growth in AI research, startup activity, and enterprise adoption, but lacks sufficient domestic AI infrastructure. By deploying 640 servers in the country, Gorilla Technology gains several advantages: alignment with local regulatory requirements, lower latency for India-based companies, and relationships with emerging AI organizations that struggle to obtain GPU capacity from global cloud providers.

However, this regional play also exposes constraints. Infrastructure deployments in India face operational challenges, including power grid reliability, cooling requirements in a tropical climate, and the need for local teams to manage and maintain equipment. The investment in Astrikos.ai partly addresses this by providing local expertise and software optimization for the Indian market. The contrast with Tesla is instructive: Tesla built and owns its Shanghai factory to serve China, whereas Gorilla Technology is partnering rather than fully controlling its India presence, an approach that is more conservative but also more complex to coordinate.

Comparing Gorilla Technology’s Approach to Pure-Play AI Competitors

The distinction between GRRR and a Tesla-like AI company becomes clear when comparing strategies. AI labs such as Anthropic and OpenAI treat computational capacity, much of it contracted from cloud partners, as a strategic input for their own products rather than selling access to it. Dedicated infrastructure providers such as CoreWeave and Lambda Labs do the opposite: they sell computing access but don’t build consumer-facing AI products. Gorilla Technology sits in the middle, deploying infrastructure at scale while also investing in software plays such as Astrikos.ai, a hedging strategy that lacks the focus of pure competitors.

The tradeoff is worth examining. By maintaining the infrastructure business while making strategic investments in software, Gorilla Technology avoids overcommitting to a single bet on which AI applications will prove commercially viable. If particular AI workloads become standard, the infrastructure side can keep the company profitable; if software optimization becomes critical, the Astrikos.ai investment provides exposure. The downside is that this approach is unlikely to produce the explosive valuation or market dominance of a company that commits fully to one strategic direction.

The Hidden Risk in GPU Dependency

One significant limitation deserves explicit attention: Gorilla Technology’s entire AI infrastructure business depends on NVIDIA’s continued dominance of the AI accelerator market. The company neither designs the chips nor controls their supply. If AMD’s Instinct accelerators, such as the MI300 series, win broader adoption, or if custom silicon from major cloud providers keeps improving, competitors could offer comparable capacity on cheaper hardware, and GRRR would face commoditization pressure from multiple directions. Custom chips from Google (TPUs), Amazon (Trainium, Inferentia), and others are already capturing workload-specific markets.

This dependency should give investors and customers pause. The company’s profitability and competitive position are largely determined by factors outside its control: NVIDIA’s pricing, production capacity, and product roadmap. The “Tesla of AI” framing breaks down here, because Tesla designs and builds its own motors and much of its battery technology, giving it vertical integration advantages that Gorilla Technology cannot match in the GPU space. For customers, the practical question is whether GRRR’s services offer enough advantage in cost, deployment speed, or software integration to justify switching from established hyperscalers.

How GRRR Differs from Established Cloud Providers

Gorilla Technology’s competitors include entrenched players with massive customer bases and existing infrastructure investments. AWS, Google Cloud, and Azure benefit from scale-driven cost advantages, long-standing enterprise relationships, and integrated services spanning compute, storage, and networking. GRRR must therefore differentiate through specialized focus, becoming excellent at AI infrastructure deployment specifically, rather than trying to match the full service portfolios of the hyperscalers.

The advantages of this focused approach are speed and specialization. A startup or research organization that needs to spin up GPU clusters may prefer GRRR’s streamlined process to navigating the AWS console or Google Cloud’s vast menu of options. The disadvantage is that as hyperscalers reprice and simplify their AI infrastructure offerings, they can undercut specialized players on price while offering tighter integration with their existing ecosystems.

The Uncertain Future of Infrastructure Advantage

Looking forward, the AI infrastructure business will likely consolidate around a few winners, probably including the hyperscalers themselves and possibly specialized providers that achieve sufficient scale and differentiation. Gorilla Technology’s success depends on whether companies and researchers keep choosing specialized AI infrastructure providers or migrate to hyperscaler offerings as those services improve.

Which of these outcomes prevails will determine whether GRRR becomes a stable, profitable business or loses competitive ground. The “Tesla of AI” comparison ultimately misses the mark because infrastructure provision, no matter how well executed, is a fundamentally different business from product innovation and vertical integration. Gorilla Technology may well become a highly successful, profitable AI infrastructure company, but that success would look more like the history of established telecom or network equipment providers than like Tesla’s disruptive trajectory.

Conclusion

GRRR (Gorilla Technology) is an important but often overlooked player in AI infrastructure provision. Its March 2026 agreements to deploy 640 NVIDIA HGX B200 servers in India, together with its investment in Astrikos.ai, demonstrate a pragmatic approach to capturing opportunity in the AI infrastructure market. However, the company faces fundamental constraints: dependence on NVIDIA for hardware, competition from entrenched hyperscalers, and the commoditization pressure inherent in selling computing capacity.

For organizations evaluating AI infrastructure providers, GRRR offers potential advantages in focused expertise and deployment speed, but these should be weighed against the broader service offerings and cost structures of established cloud providers. The company’s future depends less on being transformative like Tesla and more on executing efficiently in a competitive, consolidating market for computing infrastructure. Understanding these limitations and opportunities is essential for anyone choosing infrastructure partners in the AI era.